Lithe

Lithe is an object-based audio-graph framework for spatial composition and sound design.

It attempts to define spatial position processing independently of the rendering method (VBAP, DBAP, Ambisonics, binaural, etc.) and aims to behave consistently on both geometrically regular and irregular speaker layouts.
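To illustrate what rendering independence can look like, the sketch below keeps the source position as plain coordinates and hides the panning law behind an interface. Under this design, a DBAP backend could be swapped for VBAP or an Ambisonics encoder without touching the upstream graph. The `Renderer` and `DbapRenderer` names are hypothetical and are not Lithe's actual API. The DBAP gains follow the standard distance-based formulation: each gain is proportional to 1/d^a and the gains are normalized to constant power. This formulation accepts arbitrary, irregular speaker coordinates.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical types for illustration; not Lithe's actual API.
struct Vec3 { double x, y, z; };

// A renderer turns a renderer-agnostic source position into per-speaker gains.
class Renderer {
public:
    virtual ~Renderer() = default;
    virtual std::vector<double> gains(const Vec3& source) const = 0;
};

// Distance-based amplitude panning (DBAP): g_i proportional to 1 / d_i^a,
// normalized so that the squared gains sum to 1 (constant power).
// Speaker positions may be arbitrary, so irregular layouts are supported.
class DbapRenderer : public Renderer {
public:
    explicit DbapRenderer(std::vector<Vec3> speakers,
                          double rolloffDb = 6.0,  // attenuation per doubling of distance
                          double blur = 0.01)      // spatial blur, keeps gains finite
        : speakers_(std::move(speakers)),
          a_(rolloffDb / (20.0 * std::log10(2.0))),
          blur_(blur) {
        assert(!speakers_.empty());
    }

    std::vector<double> gains(const Vec3& s) const override {
        std::vector<double> g(speakers_.size());
        double sumSq = 0.0;
        for (std::size_t i = 0; i < speakers_.size(); ++i) {
            const Vec3& p = speakers_[i];
            const double dx = s.x - p.x, dy = s.y - p.y, dz = s.z - p.z;
            // Blurred distance avoids division by zero when the source sits on a speaker.
            const double d = std::sqrt(dx * dx + dy * dy + dz * dz + blur_ * blur_);
            g[i] = 1.0 / std::pow(d, a_);
            sumSq += g[i] * g[i];
        }
        const double norm = 1.0 / std::sqrt(sumSq);
        for (double& gi : g) gi *= norm;
        return g;
    }

private:
    std::vector<Vec3> speakers_;
    double a_;
    double blur_;
};
```

In use, one would construct `DbapRenderer` with the speaker coordinates of any layout and call `gains(position)` for each incoming position. The position itself never needs to know which renderer consumes it.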

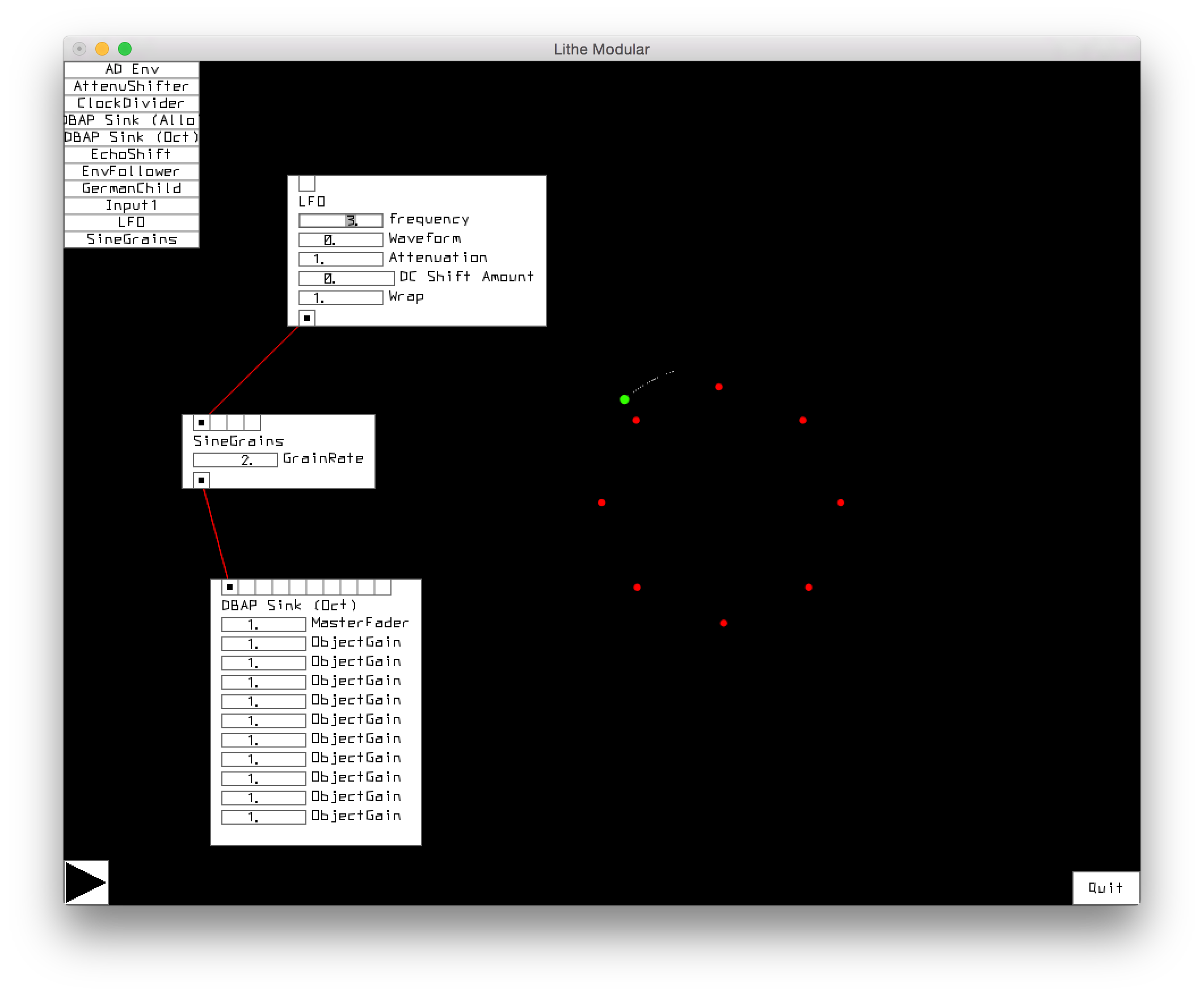

By abstracting spatial position as a continuously varying signal transmitted between nodes of the audio graph alongside the audio itself, we allow interconnections between nodes that transform both the sound and its spatial information.
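Here is a minimal sketch of this idea. It assumes per-sample processing for clarity; a real engine would more likely work in blocks. Each connection carries a `Frame` holding an audio sample and a position, so a node can transform either or both. All names here (`Frame`, `Node`, `Rotate`, `DistanceAttenuate`, `Chain`) are hypothetical and do not describe Lithe's actual classes. The 1/(1 + r) distance law is likewise only an illustrative choice.

```cpp
#include <cassert>
#include <cmath>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical frame type: every connection carries audio and position.
struct Vec3 { double x, y, z; };
struct Frame {
    double audio;  // one audio sample
    Vec3 pos;      // the source position at that sample
};

// A node may transform the sound, the position, or both.
class Node {
public:
    virtual ~Node() = default;
    virtual Frame process(const Frame& in) = 0;
};

// Spatial-only transform: rotate the position about the vertical (z) axis.
class Rotate : public Node {
public:
    explicit Rotate(double radians) : c_(std::cos(radians)), s_(std::sin(radians)) {}
    Frame process(const Frame& in) override {
        Frame out = in;
        out.pos.x = c_ * in.pos.x - s_ * in.pos.y;
        out.pos.y = s_ * in.pos.x + c_ * in.pos.y;
        return out;
    }
private:
    double c_, s_;
};

// Space-to-sound transform: attenuate audio by distance from the origin
// using a simple 1 / (1 + r) law, chosen only for illustration.
class DistanceAttenuate : public Node {
public:
    Frame process(const Frame& in) override {
        const Vec3& p = in.pos;
        const double r = std::sqrt(p.x * p.x + p.y * p.y + p.z * p.z);
        Frame out = in;
        out.audio = in.audio / (1.0 + r);
        return out;
    }
};

// Serial connection: each node receives the previous node's output frame.
class Chain : public Node {
public:
    void add(std::unique_ptr<Node> node) {
        assert(node != nullptr);
        nodes_.push_back(std::move(node));
    }
    Frame process(const Frame& in) override {
        Frame f = in;
        for (auto& node : nodes_) f = node->process(f);
        return f;
    }
private:
    std::vector<std::unique_ptr<Node>> nodes_;
};
```

Consider a chain of `Rotate` by a quarter turn followed by `DistanceAttenuate`. It moves a source at (1, 0, 0) to (0, 1, 0) and halves its amplitude, since the distance from the origin stays 1.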

This opens up avenues for powerful trajectory generation and manipulation, allowing complex spatial effects to be defined in terms of familiar audio synthesis concepts such as oscillators, filters, ADSRs, and other envelopes. It also reduces parameter complexity in the aesthetic exploration of new spatial effects, for example Spatial Modulation Synthesis and ambisonics-based spatio-temporal granulation. Further, this approach inherently allows for cross-adaptive processing between audio and trajectory signals.
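The synthesis analogies can be sketched with three small, generic building blocks:

- a circular trajectory oscillator, the spatial counterpart of an LFO;
- a one-pole lowpass filter applied to each coordinate to smooth a trajectory;
- an envelope follower whose output can drive the oscillator's radius, which is one simple form of cross-adaptive processing.

These are illustrative assumptions, not Lithe's implementations. They do not reproduce Spatial Modulation Synthesis or spatio-temporal granulation.

```cpp
#include <cassert>
#include <cmath>

struct Vec3 { double x, y, z; };

const double kTwoPi = 2.0 * std::acos(-1.0);

// Trajectory "oscillator": traces a horizontal circle at rateHz.
class CircleOsc {
public:
    CircleOsc(double rateHz, double sampleRate) : inc_(kTwoPi * rateHz / sampleRate) {
        assert(sampleRate > 0.0);
    }
    // Returns the next position on a circle of the given radius.
    Vec3 next(double radius) {
        const Vec3 p{radius * std::cos(phase_), radius * std::sin(phase_), 0.0};
        phase_ = std::fmod(phase_ + inc_, kTwoPi);  // keep phase bounded
        return p;
    }
private:
    double inc_;
    double phase_ = 0.0;
};

// Trajectory "filter": one-pole lowpass on each coordinate.
// coeff in [0, 1): larger values give smoother, slower motion.
class TrajectorySmoother {
public:
    explicit TrajectorySmoother(double coeff) : a_(coeff) {
        assert(coeff >= 0.0 && coeff < 1.0);
    }
    Vec3 process(const Vec3& in) {
        y_.x = a_ * y_.x + (1.0 - a_) * in.x;
        y_.y = a_ * y_.y + (1.0 - a_) * in.y;
        y_.z = a_ * y_.z + (1.0 - a_) * in.z;
        return y_;
    }
private:
    double a_;
    Vec3 y_{0.0, 0.0, 0.0};
};

// Audio-to-space link: an envelope follower whose output can scale a radius.
// Coefficients in [0, 1): 0 tracks instantly, values near 1 respond slowly.
class EnvelopeFollower {
public:
    EnvelopeFollower(double attackCoeff, double releaseCoeff)
        : att_(attackCoeff), rel_(releaseCoeff) {}
    double process(double sample) {
        const double level = std::fabs(sample);
        const double c = level > env_ ? att_ : rel_;
        env_ = c * env_ + (1.0 - c) * level;
        return env_;
    }
private:
    double att_, rel_;
    double env_ = 0.0;
};
```

For each sample, one might compute `osc.next(baseRadius + depth * env.process(audioIn))` and pass the result through `smoother.process(...)`. Louder passages would then widen the circle, while the filter rounds off abrupt jumps. Here `baseRadius` and `depth` are hypothetical parameters.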

Lithe is implemented as a C++ library and was demonstrated in two separate applications: a proof-of-concept "spatial" modular synthesizer, and the sound synthesis engine of the spatial sound installation HIVE.

This project was completed as my master’s project in the Media Arts and Technology graduate program at UCSB, advised by Curtis Roads (chair), Andrés Cabrera, and Clarence Barlow.